This is a guest post by John Cook, The University of Queensland.

Science denial has real societal consequences. Denial of the link between HIV and AIDS led to more than 330,000 premature deaths in South Africa. Denial of the link between smoking and cancer has caused millions of premature deaths. Thanks to vaccination denial, preventable diseases are making a comeback.

Denial is not something we can ignore or, well, deny. So what does scientific research say is the most effective response? Common wisdom says that communicating more science should be the solution. But a growing body of evidence indicates that this approach can actually backfire, reinforcing people’s prior beliefs.

When you present evidence that threatens a person’s worldview, it can actually strengthen their beliefs. This is called the “worldview backfire effect”. One of the first scientific experiments that observed this effect dates back to 1975.

A psychologist from the University of Kansas presented evidence to teenage Christians that Jesus Christ did not come back from the dead. Now, the evidence wasn’t genuine; it was created for the experiment to see how the participants would react.

Their faith actually strengthened in response to the evidence challenging it. This type of reaction happens across a range of issues. When US Republicans are given evidence of no weapons of mass destruction in Iraq, they believe more strongly that there were weapons of mass destruction in Iraq. When you debunk the myth linking vaccination to autism, anti-vaxxers respond by opposing vaccination more strongly.

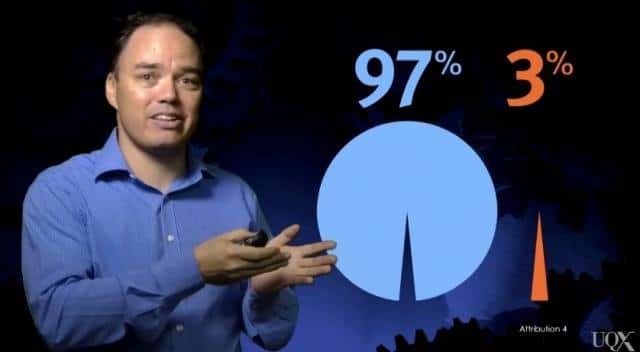

In my own research, when I’ve informed strong political conservatives that there’s a scientific consensus that humans are causing global warming, they become less accepting that humans are causing climate change.

Brute force meets resistance

Ironically, the practice of throwing more science at science denial ignores the social science research into denial. You can’t adequately address this issue without considering the root cause: personal beliefs and ideology driving the rejection of scientific evidence. Attempts at science communication that ignore the potent influence of worldview can be futile or even counterproductive.

How then should scientists respond to science denial? The answer lies in a branch of psychology dating back to the 1960s known as “inoculation theory”. Inoculation is an idea that changed history: stop a virus from spreading by exposing people to a weak form of the virus. This simple concept has saved millions of lives.

In the psychological domain, inoculation theory applies the concept of inoculation to knowledge. When we teach science, we typically restrict ourselves to just explaining the science. This is like giving people vitamins. We’re providing the information required for a healthier understanding. But vitamins don’t necessarily grant immunity against a virus.

There is a similar dynamic with misinformation. You might have a healthy understanding of the science. But if you encounter a myth that distorts the science, you’re confronted with a conflict between the science and the myth. If you don’t understand the technique used to distort the science, you have no way to resolve that conflict.

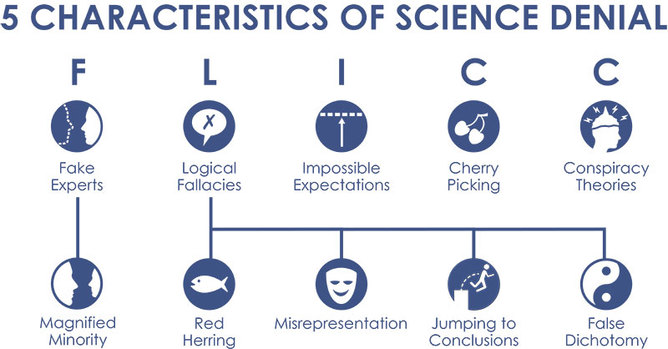

Half a century of research into inoculation theory has found that the way to neutralise misinformation is to expose people to a weak form of the misinformation. The way to achieve this is to explain the fallacy employed by the myth. Once people understand the techniques used to distort the science, they can reconcile the myth with the fact.

Skeptical Science

There is perhaps no more apt way to demonstrate inoculation theory than to address a myth about vaccination. A persistent myth about vaccination is that it causes autism.

This myth originated from a Lancet study which was subsequently shown to be fraudulent and was retracted by the journal. Nevertheless, the myth persists simply due to the persuasive fact that some children have developed autism around the same time they were vaccinated.

This myth uses the logical fallacy of post hoc, ergo propter hoc, Latin for “after this, therefore because of this”. This is a fallacy because correlation does not imply causation. Just because one event happens around the same time as another event doesn’t imply that one causes the other.

The only way to demonstrate causation is through statistically rigorous scientific research. Many studies have investigated this issue and shown conclusively that there is no link between vaccination and autism.

Inoculating minds

The response to science denial is not just more science. We stop science denial by exposing people to a weak form of science denial. We need to inoculate minds against misinformation.

The practical application of inoculation theory is already happening in classrooms, with educators adopting the teaching approach of misconception-based learning (also known as agnotology-based learning or refutational teaching).

This involves teaching science by debunking misconceptions about the science. This approach results in significantly higher learning gains than customary lectures that simply teach the science.

While this is currently happening in a few classrooms, Massive Open Online Courses (or MOOCs) offer the opportunity to scale up this teaching approach to reach potentially hundreds of thousands of students. At the University of Queensland, we’re launching a MOOC that makes sense of climate science denial.

Our approach draws upon inoculation theory, educational research into misconception-based learning and the cognitive psychology of debunking. We explain the psychological research into why and how people deny climate science.

Having laid the framework, we examine the fallacies behind the most common climate myths. Our goal is for students to learn how to identify the techniques used to distort climate science and feel confident responding to misinformation.

A typical response of scientists to science denial is to teach more science. But that only provides half of what’s needed. Scientific research has offered us a solution: build resistance to science denial by exposing people to a weak form of science denial.

This article was originally published on The Conversation.
